Machine learning for novices Part 2

Unsupervised learning

Unsupervised learning is an interesting case in which the “right answers” are unknown to us. Suppose we know the height and weight of a certain number of people. We want to group the data into 3 categories so that a shirt of a suitable size can be produced for each category. Such a task is called clustering.

Clustering into 3 clusters. Note that the division into clusters is not clear-cut, and there is no single “correct” division.
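As a rough illustration of the shirt-size example, here is a minimal sketch of k-means, one common clustering algorithm (the text does not say which algorithm to use, so this choice is an assumption). The height and weight values, the `kmeans` function and its parameters are all invented for the example.

```python
import random

def squared_distance(p, q):
    """Squared Euclidean distance between two (height, weight) points."""
    return (p[0] - q[0]) ** 2 + (p[1] - q[1]) ** 2

def kmeans(points, k, iterations=50, seed=0):
    """Plain k-means: returns (centroids, labels) for a list of 2-D points."""
    rng = random.Random(seed)
    centroids = rng.sample(points, k)  # start from k of the data points
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: attach each point to its nearest centroid.
        labels = [
            min(range(k), key=lambda c: squared_distance(p, centroids[c]))
            for p in points
        ]
        # Update step: move each centroid to the mean of its members.
        new_centroids = []
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                new_centroids.append((
                    sum(m[0] for m in members) / len(members),
                    sum(m[1] for m in members) / len(members),
                ))
            else:
                new_centroids.append(centroids[c])  # keep an empty cluster in place
        if new_centroids == centroids:
            break  # nothing moved, so the assignment is stable
        centroids = new_centroids
    return centroids, labels

# Invented (height in cm, weight in kg) measurements.
people = [(155, 50), (158, 53), (160, 55),
          (170, 68), (172, 70), (175, 72),
          (185, 88), (188, 92), (190, 95)]

centroids, labels = kmeans(people, k=3)
for person, label in zip(people, labels):
    print(person, "-> shirt group", label)
```

The result depends on the random starting centroids, which fits the point made above: there is no single “correct” division.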

Another example is the situation where every object is described by, say, 100 features. The problem with such data is that plotting it is, to put it mildly, difficult, so we can reduce the number of features to two or three. Then the data can be visualized on a plane or in space. This task is called dimensionality reduction.
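One widely used way to reduce the number of features is principal component analysis (PCA). The text does not name a method, so PCA is an assumption here. The sketch below uses only the standard library, finds the main directions of variation by power iteration, and projects invented 3-feature data onto 2 features; all function names and data are hypothetical.

```python
import math

def covariance_matrix(rows):
    """Return (covariance matrix, mean-centered rows) for a list of feature rows."""
    n, d = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(d)]
    centered = [[r[j] - means[j] for j in range(d)] for r in rows]
    cov = [[sum(c[i] * c[j] for c in centered) / (n - 1) for j in range(d)]
           for i in range(d)]
    return cov, centered

def top_component(matrix, iterations=200):
    """Power iteration: approximate the dominant eigenvector of a symmetric matrix."""
    d = len(matrix)
    v = [float(i + 1) for i in range(d)]  # arbitrary non-zero starting vector
    for _ in range(iterations):
        w = [sum(matrix[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        if norm == 0:
            break
        v = [x / norm for x in w]
    return v

def pca(rows, n_components):
    """Project rows onto their first n_components principal directions."""
    cov, centered = covariance_matrix(rows)
    d = len(cov)
    components = []
    for _ in range(n_components):
        v = top_component(cov)
        eigenvalue = sum(v[i] * sum(cov[i][j] * v[j] for j in range(d))
                         for i in range(d))
        components.append(v)
        # Deflation: remove the direction just found, so the next pass finds the next one.
        cov = [[cov[i][j] - eigenvalue * v[i] * v[j] for j in range(d)]
               for i in range(d)]
    projected = [[sum(c[j] * comp[j] for j in range(d)) for comp in components]
                 for c in centered]
    return projected, components

# Invented data: the third feature is roughly the sum of the first two.
rows = [(1.0, 2.0, 3.1), (2.0, 1.0, 2.9), (3.0, 4.0, 7.2),
        (4.0, 3.0, 6.8), (5.0, 6.0, 11.1), (6.0, 5.0, 10.9)]

projected, components = pca(rows, n_components=2)
for original, reduced in zip(rows, projected):
    print(original, "->", [round(x, 3) for x in reduced])
```

With real data of 100 features the same idea applies, although a numerical library would normally be used instead of hand-written loops.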

Classes of machine learning problems

In the previous section we gave several examples of machine learning tasks. In this section we will try to generalize them into categories, with a list of additional examples for each.

Regression: predicting a real-valued response from various features. In other words, the answer may be 1, 5, 23.575, or any other real number, which can represent, for example, a price. Examples: predicting the value of a stock six months from now, predicting a store’s profit for the next month, predicting the quality of a wine in a blind test.
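To make the store profit example concrete, here is a minimal sketch of the simplest kind of regression: fitting a straight line by least squares. The monthly profit figures and the function name `fit_line` are invented for illustration, and real data would rarely lie on a perfect line.

```python
def fit_line(xs, ys):
    """Ordinary least squares for one feature: returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Invented data: month number and the store's profit in that month.
months = [1, 2, 3, 4, 5]
profits = [10.0, 12.0, 14.0, 16.0, 18.0]

slope, intercept = fit_line(months, profits)
next_month_profit = slope * 6 + intercept  # the prediction is a real number
print(next_month_profit)  # 20.0
```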

Classification: predicting a categorical response from various features. In other words, the number of possible answers in this task is finite, as when determining whether a patient has cancer or whether an email is spam. Examples: recognizing text from handwritten input, determining whether a picture shows a person or a cat.
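As a small illustration of the spam example, here is a sketch of a k-nearest-neighbors classifier, which is only one of many possible classification algorithms and is chosen here as an assumption. The two features (number of links and number of exclamation marks in an email), the data and the function name `knn_predict` are invented.

```python
from collections import Counter

def knn_predict(train, query, k=3):
    """Return the most common label among the k training items closest to query."""
    nearest = sorted(
        train,
        key=lambda item: sum((a - b) ** 2 for a, b in zip(item[0], query)),
    )[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]

# Invented training set: ((links, exclamation marks), label).
emails = [
    ((0, 0), "not spam"), ((1, 0), "not spam"), ((0, 1), "not spam"),
    ((8, 5), "spam"), ((7, 6), "spam"), ((9, 4), "spam"),
]

print(knn_predict(emails, (8, 6)))  # spam
print(knn_predict(emails, (1, 1)))  # not spam
```

Unlike regression, the answer here is always one of a fixed set of labels.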

Clustering: dividing the data into groups of similar objects. Examples: segmenting the customers of a mobile operator by ability to pay, grouping space objects into similar kinds (galaxies, planets, stars and so on).

Dimensionality reduction: learning to describe our data with a smaller number of features than it originally has (usually 2-3, for subsequent visualization). Besides visualization, another example application is data compression.

Anomaly detection: learning to distinguish anomalies from “non-anomalies” based on the features. It may seem that this task is no different from classification. But the distinctive feature of anomaly detection is that we have either very few examples of anomalies for training the model or none at all, so we can’t solve it as a classification problem. Example: identifying fraudulent credit card transactions.
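The difference from classification can be sketched as follows: the model below is fitted only on ordinary transactions and flags anything that lies too far from them. The simple z-score rule, the threshold of 3 standard deviations, the transaction amounts and the function names are all assumptions made for illustration, not a production fraud detector.

```python
import statistics

def fit_normal_profile(amounts):
    """Describe 'normal' behaviour by the mean and standard deviation of the amounts."""
    return statistics.mean(amounts), statistics.stdev(amounts)

def is_anomaly(amount, profile, threshold=3.0):
    """Flag an amount that lies more than `threshold` standard deviations from the mean."""
    mean, std = profile
    return abs(amount - mean) / std > threshold

# Invented training data: only ordinary transactions, no fraud examples at all.
normal_amounts = [20.0, 25.0, 22.0, 30.0, 27.0, 24.0, 26.0, 23.0]
profile = fit_normal_profile(normal_amounts)

print(is_anomaly(28.0, profile))   # False: close to the usual amounts
print(is_anomaly(950.0, profile))  # True: far outside the usual range
```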

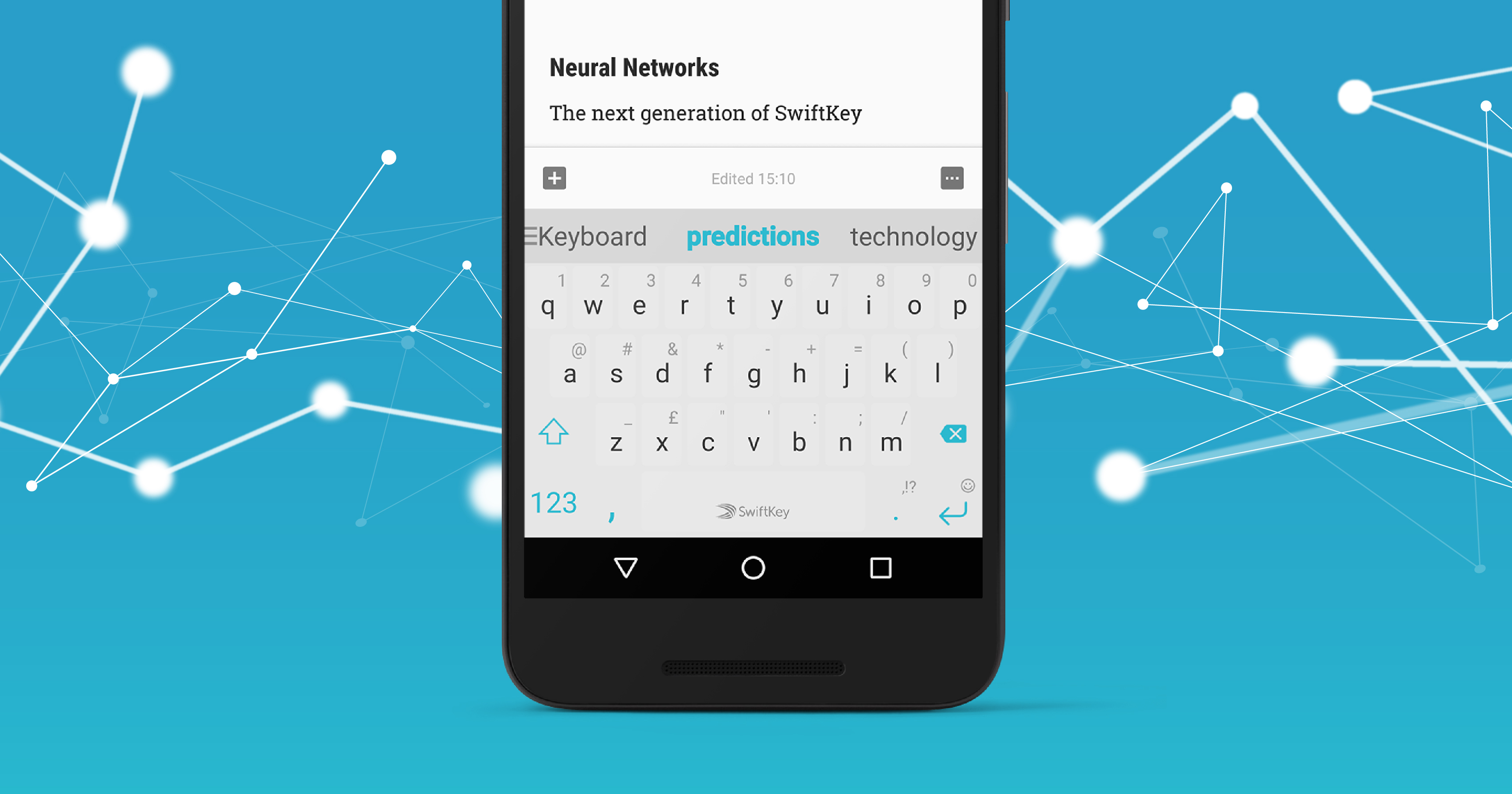

Neural network

Machine learning has a large number of algorithms, and some of them are fairly universal. Examples include support vector machines, boosting over decision trees, and neural networks. Unfortunately, most people have only a vague idea of what neural networks are and attribute to them properties they do not possess.

A neural network (or artificial neural network) is a network of neurons in which each neuron is a mathematical model of a real neuron. Neural networks became very popular in the 80s and early 90s, but by the end of the 90s their popularity had plummeted. In recent years, however, they have again become one of the leading technologies in machine learning, used in a huge number of applications. The reason for the return of their popularity is simple: the computational power of computers has increased.

Neural networks can be used to solve at least regression and classification tasks and to build extremely complex models. Without going into mathematical details, we can say that in the middle of the last century.

In fact, a neuron in an artificial neural network is a mathematical function (e.g., the sigmoid function): it receives some value as input and outputs the value obtained by applying that function.
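A minimal sketch of such a neuron is shown below. The sigmoid function comes from the text. The weighted sum of several inputs plus a bias is the usual textbook formulation and is an assumption here, as are the function names and the example numbers.

```python
import math

def sigmoid(z):
    """Sigmoid function: maps any real number into the interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron(inputs, weights, bias):
    """One artificial neuron: weighted sum of the inputs, passed through the sigmoid."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return sigmoid(z)

# Invented inputs and weights; here the weighted sum is exactly 0.
output = neuron(inputs=[1.0, 2.0], weights=[0.5, -0.25], bias=0.0)
print(output)  # 0.5
```

A network is built by feeding the outputs of such neurons into the inputs of other neurons.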